For universities, the concern was immediate: if AI can write fluently, unpredictably, and in discipline-appropriate academic language, does detectability still hold?

Early results show that it does.

How StrikePlagiarism responds to GPT-5.2

The release of GPT-5.2 reinforced a broader challenge facing higher education: AI development now outpaces institutional policy cycles. For StrikePlagiarism, this moment required immediate empirical validation rather than theoretical assumptions.

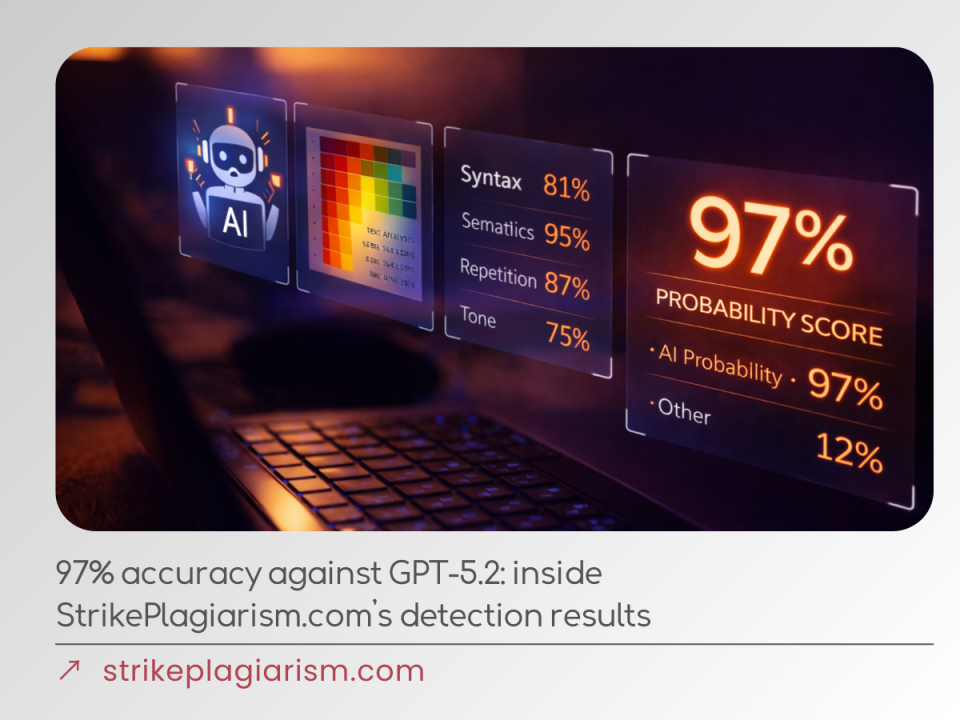

Within days of GPT-5.2 entering academic use, StrikePlagiarism.com was tested against newly generated and paraphrased GPT-5.2 texts under realistic academic conditions. The results were unambiguous:

- Over 97% detection accuracy across GPT-5.2 outputs

- False-positive rate below 1%, preserving academic fairness

- Consistent performance after paraphrasing and stylistic diversification

Rather than relying on surface-level markers, StrikePlagiarism.com analysed behavioural consistency across longer academic texts — identifying patterns that remain statistically improbable in authentic student work. Reports delivered probability-based, side-by-side comparisons, providing educators with interpretable evidence rather than automated verdicts.

Why GPT-5.2 remains detectable

GPT-5.2 demonstrates strong control over academic conventions and avoids obvious repetition. However, analysis across extended submissions consistently revealed:

- non-random reasoning structures,

- unusually uniform transitions between claims,

- absence of natural cognitive drift.

Individually, these signals are subtle. Taken together, they form a measurable behavioural profile. Detection no longer depends on awkward phrasing or stylistic errors, but on identifying improbably stable reasoning across complex texts. Fluency improves — invisibility does not.

Core advantages of StrikePlagiarism.com’s AI detection approach

StrikePlagiarism.com was designed to support institutions operating at scale, across disciplines and languages:

- Multilingual AI-content detection at scale

AI-generated content is detected across 100+ languages, enabling consistent integrity standards in international and multilingual academic environments.

- Proven accuracy against advanced generative models

Detection accuracy exceeds 97%, including on paraphrased and stylistically diversified GPT-5.2 texts, demonstrating reliability under real academic conditions.

- Ultra-low false-positive rates

False-positive rates remain below 1%, protecting students from incorrect attribution and ensuring that detection strength never compromises fairness.

Why AI detection is critical right now

GPT-5.2 makes one reality clear: the primary risk for universities is no longer obvious AI misuse, but large volumes of academically convincing AI-generated work entering assessment unnoticed. This is not a future concern — it is a present operational challenge.

StrikePlagiarism addresses this challenge at an institutional level. By combining high-accuracy AI behaviour analysis with transparent, probability-based reporting, StrikePlagiarism.com enables universities to respond now, not retrospectively. When academic decisions must be defensible at the moment they are made, evidence-based AI detection becomes essential infrastructure rather than an optional safeguard.
