Many potential students and their parents are questioning the need to invest time and money in a university degree when AI appears poised to replace human expertise. But this argument overlooks the fact that history rarely records cases of outright replacement. More often, technology enables humans to achieve more than before – if they are well educated, that is.

The generative artificial intelligence (GenAI) revolution is a call not to abandon higher education, but to rethink its purpose. Degrees, especially at the graduate level, are more important than ever because they develop the human capacity for extrapolation – the ability to extend beyond what machines already know.

Interpolation v extrapolation

AI models learn from vast amounts of text, enough to keep a human reading non-stop for thousands of years. They use mathematical strategies to infer words that fit common patterns. This is not genuine thinking; it is mostly copying what has been seen before. It is, in essence and design, interpolation.

Picture everything humans know and have stated explicitly as dots on a graph. Interpolation is the operation of connecting dots in crowded areas – like AI filling in blanks from past examples, such as suggesting email replies or spotting trends in sales data.

Extrapolation means drawing lines into empty spaces inferred from data in nearby crowded areas – like guessing what happens in brand-new situations, where humans use basic rules or principles to create fresh ideas.
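The dots-on-a-graph picture can be made concrete with a toy sketch. Everything in it is an assumption chosen for illustration: the hidden rule (squaring a number), the eleven known dots and the function names are hypothetical, and a connect-the-dots predictor is only a loose stand-in for how GenAI behaves, not a model of it.

```python
# Toy illustration: known facts are dots sampled from a hidden rule.
def hidden_rule(x: float) -> float:
    """Stand-in for the underlying principle (an assumption for this sketch)."""
    return x * x


# Dots in the "crowded area": x = 0, 1, ..., 10.
KNOWN_X = list(range(11))
KNOWN_Y = [hidden_rule(x) for x in KNOWN_X]


def connect_the_dots(x: float) -> float:
    """Predict by drawing a straight line through two neighbouring known dots.

    Inside the data range this is interpolation. Outside it, the nearest
    segment is simply extended, which is a naive form of extrapolation.
    """
    # Pick the segment containing x, clamped to the first or last segment.
    i = min(max(int(x), 0), len(KNOWN_X) - 2)
    x0, x1 = KNOWN_X[i], KNOWN_X[i + 1]
    y0, y1 = KNOWN_Y[i], KNOWN_Y[i + 1]
    slope = (y1 - y0) / (x1 - x0)
    return y0 + slope * (x - x0)


def error_at(x: float) -> float:
    """Absolute gap between the prediction and the hidden rule."""
    return abs(connect_the_dots(x) - hidden_rule(x))


inside_error = error_at(4.5)    # between known dots
outside_error = error_at(20.0)  # far from any known dot

print(f"error inside the data:  {inside_error:.2f}")
print(f"error outside the data: {outside_error:.2f}")
```

With these illustrative numbers the error is 0.25 between the dots and 110 well beyond them. Someone who knows the rule itself gets both answers right, which is the sense in which principles, rather than patterns, carry reasoning into empty space.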

GenAI is excellent at interpolation but weak at extrapolation. Where the training data is thin or non-existent in the region we wish to explore, it may hallucinate, and cleverly worded questions can still trip it up. GenAI will improve, but humans will continue to hold superior value through creativity, empathy and critical-ethical judgement – qualities that advances in AI and robotics can enhance but will not entirely displace as the central drivers of progress. For now, and into the future, GenAI remains a helper, not a master.

Training effective extrapolators

If higher education is to train effective extrapolators, educators must create the conditions for that skill to flourish. GenAI may handle information; it’s up to us to shape how students reason with it. Here, we’ll outline three strategies to prepare graduates for intelligent coexistence with GenAI.

The human override: discipline-specific extrapolation

Educators can build the capacity to override GenAI by training students to check outputs, challenge assumptions and add human insight. This equips graduates to use GenAI as a partner rather than a substitute. The machine can propose quick answers, but humans must verify, contextualise and make responsible decisions.

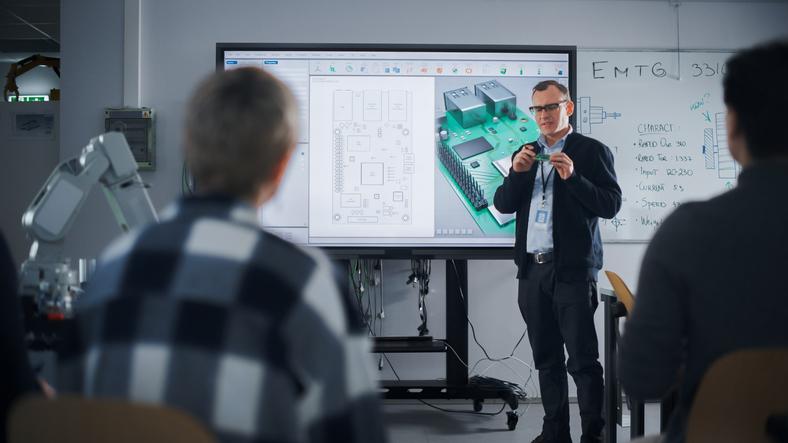

This principle works across disciplines. Mechanical engineering offers a clear example. GenAI can rapidly generate design alternatives or simulations, but it cannot judge whether a solution survives real-world constraints. A trained engineer must apply first principles, such as physics, safety factors, materials limits and manufacturability, to select and refine what GenAI proposes.

One practical way to embed this in teaching is to require students to audit GenAI outputs against first principles. Students might ask GenAI to propose a lightweight bracket design or summarise design options, but the assessed work begins with a structured human check: stating assumptions, verifying units, identifying boundary conditions and testing feasibility against safety factors, fatigue limits, manufacturability and cost. A short justification memo can then explain what the student accepted, rejected or modified, and why. In effect, GenAI becomes a junior assistant while the student is trained and graded on judgement.
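As a minimal sketch of what one step of such an audit could look like, the example below checks a proposed bracket against a required safety factor. It rests on loudly stated assumptions: a static axial load with uniform stress, a required safety factor of 2.0, and made-up numbers. The class and function names are hypothetical, and a real audit would also cover fatigue, bending, stress concentrations, manufacturability and cost.

```python
from dataclasses import dataclass


@dataclass
class BracketProposal:
    """A bracket design as a GenAI tool might propose it (hypothetical fields)."""
    load_n: float              # applied axial load, newtons
    area_mm2: float            # load-bearing cross-section, square millimetres
    yield_strength_mpa: float  # material yield strength, megapascals


def audit(proposal: BracketProposal, required_safety_factor: float = 2.0) -> dict:
    """Check a proposal against a first-principles stress calculation."""
    # Assumption stated explicitly: static axial load, uniform stress.
    if (proposal.load_n <= 0 or proposal.area_mm2 <= 0
            or proposal.yield_strength_mpa <= 0):
        raise ValueError("load, area and yield strength must all be positive")

    # Units check: N / mm^2 equals MPa, so stress compares directly
    # with the yield strength.
    stress_mpa = proposal.load_n / proposal.area_mm2
    safety_factor = proposal.yield_strength_mpa / stress_mpa

    return {
        "stress_mpa": stress_mpa,
        "safety_factor": safety_factor,
        "accepted": safety_factor >= required_safety_factor,
    }


# Illustrative numbers only, not taken from any real design or datasheet.
ai_proposal = BracketProposal(load_n=12_000, area_mm2=60, yield_strength_mpa=275)
first_check = audit(ai_proposal)

# The student modifies the design (a larger section) and re-runs the audit.
revised = BracketProposal(load_n=12_000, area_mm2=100, yield_strength_mpa=275)
second_check = audit(revised)

print(first_check)
print(second_check)
```

Here the first proposal is rejected and the revised one is accepted. The calculation is trivial by design: the graded work is the student's reasoning about which assumptions hold, and the justification memo records why a design was accepted, rejected or modified.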

Interdisciplinary collaboration within human-AI teams

Higher education has long valued interdisciplinary collaboration. In the current era, collaboration takes on a new twist: graduates must still learn to work effectively with others, but they must also learn to collaborate with an artificially intelligent team member. GenAI should be treated as a companion and co-pilot that can accelerate routine steps and offer useful critique, but not as an authority. It works best as an untrusted junior collaborator whose outputs must be checked, challenged and refined through senior human judgement. Rather than opposing AI, universities can assign group tasks that use GenAI openly.

Stay true to the principles of scepticism

As GenAI tools become more capable, users increasingly treat them as authoritative – and not always for good reason. Students should be trained to question their outputs, especially when dealing with novel problems. The more certain an AI seems, the more a trained extrapolator should investigate – cross-checking assumptions and applying first principles to turn potential errors into innovations.

Educators can embed this scepticism by making verification a required, repeatable routine in coursework, not an optional attitude. Students must identify the assumptions GenAI is making, test an edge case where the answer might fail, and cross-check the result against an alternative model or a small empirical check. Educators can also borrow a routine practice from human testers of large language models: students are given a plausible GenAI output that contains subtle errors and are graded on finding and correcting them.

The future belongs to the extrapolators

Geoffrey Hinton, a 2024 Nobel laureate in physics, warned: “We have no experience of what it’s like to have things smarter than us.” GenAI may master information and data, but not imagination and critical thinking. Higher education remains humanity’s best means of training humans to use AI to tackle the world’s problems. If we can prepare students for critical, ethical and creative coexistence, they will not just survive the AI era; they will define it.

The future belongs to the extrapolators – those who can imagine, reflect and act with the compassion and ethics that machines lack.

Darkhan Bilyalov is assistant professor at the Graduate School of Education and Luis R. Rojas-Solórzano is associate provost for graduate studies at Nazarbayev University.

Disclaimer: Authors used AI tools to support the writing process – Grammarly, ChatGPT and Grok xAI for proofreading, language correction and fact-checking. All ideas, interpretations and recommendations reflect the authors’ original work.
