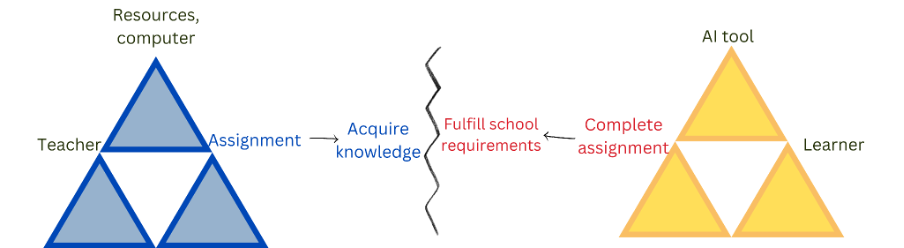

As educators, we are navigating a misalignment of intention and outcomes in our classrooms. When we design an assignment, our intention is clear: we want students to engage in the intellectual operations necessary to develop new competencies. However, with the advent of generative artificial intelligence (GenAI), a student’s motivation can easily shift to simply completing the assignments to fulfil school requirements.

If students use AI for task completion or performance, they bypass the very learning process we have designed for them. The core challenge we face today is not just preserving academic integrity; it is preventing the erosion of human intellectual agency.

If we want learners to play an active, critical role in seeking and constructing knowledge, how do we integrate AI without surrendering this capability to the machine?

Framing the challenge through activity theory

To understand this issue, I use the lens of Engeström’s activity theory. Any learning activity comprises subjects (students), tools (in this case, AI) and objects (the learning goal). When AI is used merely as an answer generator, it short-circuits the activity system and human cognitive effort is minimised. If students rely on AI for task completion, prioritising efficiency and speed over deep contextual understanding – that is, durable “slow” knowledge – they risk reducing human intellectual operations. We must consciously design our teaching to prevent learners from bypassing learning.

To preserve this intellectual agency, we need to stop treating AI as a monolithic tool and instead guide students through purposeful pedagogical engagement with it.

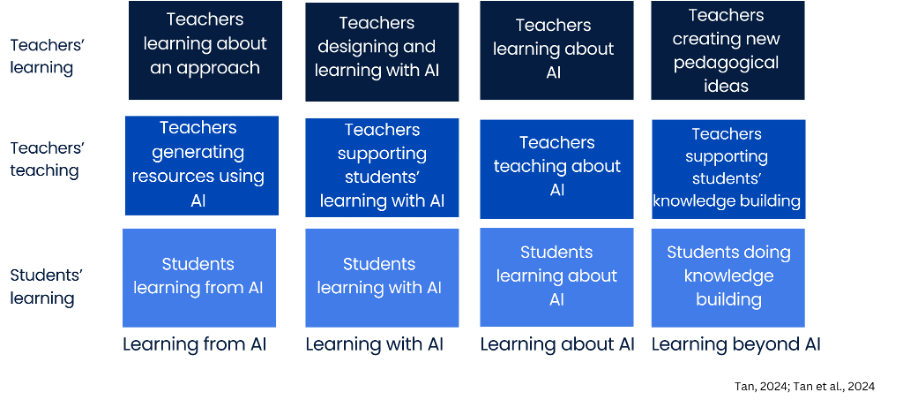

The four dimensions: learning from, with, about and beyond AI

In my work, I advocate for a four-dimensional framework to help educators map how students interact with AI, shifting students from passive consumers to active creators.

1. Learning from AI

Learning from AI is the baseline. Here, AI acts as a tutor or a repository of information, and the student is primarily a seeker of knowledge. Effective learning can take place in this dimension if students have the self-directed learning competency and actively process AI-generated information. While this dimension is useful for foundational understanding, stopping here limits the student to passive consumption and increases their risk of adopting hallucinations as facts.

2. Learning with AI

Learning with AI introduces pedagogical scaffolding. The tool is no longer just giving answers; it is a cognitive partner. Pedagogically, we are using AI to support students’ learning within their zones of proximal development (ZPD), the space between what they can do unaided and what they can do with assistance. This “intellectual partner” should provoke deeper enquiry and act as a sounding board that extends the learner’s own thinking processes rather than doing the thinking for them.

3. Learning about AI

To maintain agency, students must be AI literate. They need to understand the mechanics, biases, limitations and ethical implications of the tools they use. They also need to know how to use AI for effective learning and why delegating thinking to AI will be detrimental to their own learning. You cannot exercise agency over a tool you do not fundamentally understand.

4. Learning beyond AI

The ultimate goal of the framework, rooted in the pedagogy of knowledge building, is to take learning beyond AI. At this stage, the goal shifts from individual knowledge acquisition to collective idea improvement and knowledge-creation capacity. AI might help synthesise information or spark a novel connection, but the heavy lifting of advancing community knowledge, evaluating nuance and creating new paradigms remains a collaborative, human endeavour.

Implementation: the teacher as the first learner

Before we can effectively guide students through this continuum, educators must experience it themselves. In a recent pilot, we introduced a knowledge-building learning companion for teachers – it looks like a discussion forum but is AI enhanced. We wanted to see what happens when educators use AI to design lessons.

The preliminary findings were revealing. Educators reported that the AI was a powerful “brainstorming partner”. Rather than generating a generic lesson plan, the AI companion triggered deeper reflection on their own instructional practices. It helped them contextualise their lesson designs and provided a space for spontaneous reflection.

However, practical “disturbances” in our activity system also emerged:

- The competency prerequisite: The idea that AI thinks for you is a misconception. In reality, educators found that to use the companion effectively, they needed to have a good understanding of pedagogy and sound content knowledge. You cannot guide an AI tool if you do not know where you are going.

- Conversational limitations: The technology is not all-knowing. Educators noted that conversations with the AI could sometimes “go round in a circle”. If a planning session was protracted, the AI would occasionally lose its “memory” of earlier context, so the user had to repeat an idea to “remind” the tech.

- The need for strategic depth: While the AI efficiently generated a high volume of ideas, educators craved deeper pedagogical sparring. They wanted the AI to push beyond surface-level suggestions and cater more deeply to the rationale and overarching strategy of the lesson.

These are fundamental pedagogical tensions. Navigating them, by understanding the AI’s limitations and learning how to steer the technology strategically, is what “learning with” and “learning about” AI look like in practice.

Key takeaways for educators

Moving students’ AI use from performance to intellectual agency requires deliberate design. Here is how you can begin shifting your practice today:

- Redesign for epistemic agency: Audit your assignments. If students can use AI to entirely bypass a task, it needs to be redesigned. Shift the assessment focus towards activities where students must critique, adapt, contextualise or build upon AI-generated outputs.

- Scaffold working “with” and “beyond” AI: Do not assume students know how to collaborate with AI. Explicitly model the process. Show them how to prompt AI as a sparring partner (learning with), and ensure that you design final projects that require collective, human-driven consensus and innovation that the AI cannot reach on its own (learning beyond).

- Start as a companion: If you are hesitant about integrating AI into your classroom, start with your own work. Use an AI tool as a pedagogical companion for lesson design. Experiencing the tensions of trust and agency first-hand is the best preparation for guiding your students through the same complex landscape.

Ultimately, teachers should not compete with AI’s ability to produce fast answers. The real work of education remains the same: fostering our students’ intellectual agency. If we are deliberate about how we scaffold their use of these tools, moving them from simply seeking information to engaging in genuine and collective knowledge building, we ensure that the cognitive heavy lifting stays exactly where it belongs: with the human learner.

Tan Seng Chee is the associate vice-provost (education transformation) and the provost’s chair in education at Nanyang Technological University, Singapore.

This is an edited version of “From performance to intellectual agency: a four-dimensional framework for AI in education”, which was first published on the blog of NTU’s Institute for Pedagogical Innovation, Research and Excellence.
